I have to thank Vincent Kars for linking me to Jim LeSurf's site a while back. On it I found many interesting pieces of information, among them the IQ-test that Vincent pointed me to: http://www.audiomisc.co.uk/Linux/Sound3/TimeForChange.html

In summary, the IQ-test is Jim's replacement for the ubiquitous J-test, which is fine for SPDIF signals but misapplied when testing USB audio. Jim found the J-test inappropriate for USB audio because it was designed to expose the flaws in SPDIF signalling. His IQ-test was able to uncover the digital "wow & flutter" of USB audio, i.e. differences in timing over a longer analysis period than is usually used for testing jitter. I will come back to this in a minute & ask some questions about it. But first some background:

The explanation of what the IQ-test does & the thinking behind it is given here: http://www.audiomisc.co.uk/Linux/Sound3/TheIQTest.html

The source code for anyone wishing to run the test is given here (scroll down to near the bottom): http://www.audiomisc.co.uk/software/index.html

IQ-test results for the DACMagic & Halide Bridge, along with an explanation of the test setup, are given here: http://www.audiomisc.co.uk/Linux/Sound3/TimeForChange.html

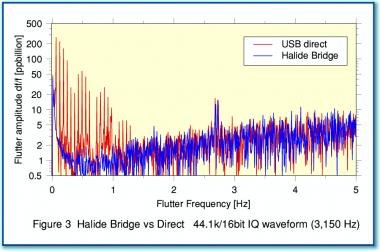

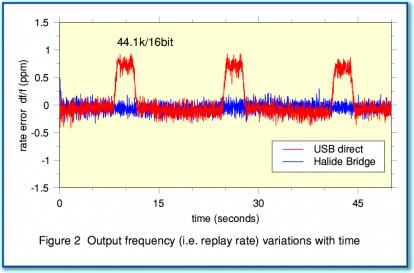

The graphs from that first IQ-test compare the analogue outs of the DACMagic when fed audio via its native adaptive USB input vs. the asynchronous USB Halide Bridge feeding SPDIF into the DACMagic.

None of this is terribly surprising, perhaps, when comparing adaptive USB to asynchronous USB. However, the extent of the difference at the analogue outs of the DACMagic is probably surprising given the ASRC that it uses: "Our unique ATF2 (Adaptive Time Filtering) upsampling technology, developed in conjunction with Anagram Technologies, Switzerland." One possible answer is that the low-frequency jitter seen in the IQ-test will get through any ASRC because it is below the cut-off frequency of most, if not all, rate estimators.

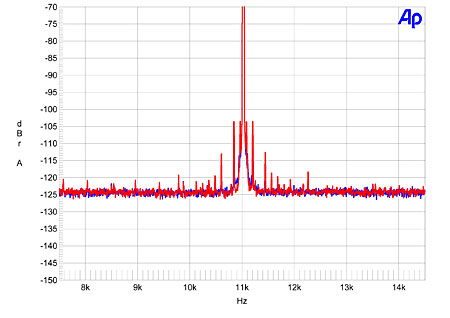

Feel free to discuss this point if you wish, but here's the bit I'm most interested in asking about. Given that we have FFT analysis on Stereophile of the DACMagic jitter at its analogue outs, why is this gross level of jitter not showing up in the FFTs?

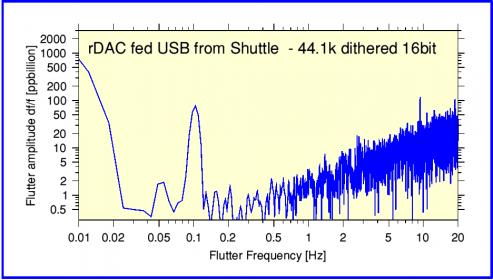

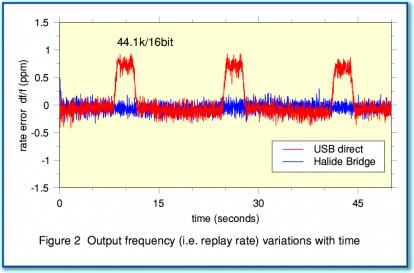

By gross level of jitter, I mean that in that IQ-test graph the timing jumps by 1 microsecond & stays there for 2 seconds before dropping back to the original correct timing. This pattern repeats every 10 seconds. Each individual jump is not big, but when you translate this into jitter figures it is significant. According to LeSurf's analysis of the 48 kHz test, which shows a rate jump of about 8 ppm: "Here the rate jumps down about 8 ppm for around 2 seconds at a time. Now an 8 ppm change in rate accumulates to a timing error of 16 microseconds over two seconds. i.e. a ‘jitter’ over this period of 16 million picoseconds! This is many orders of magnitude greater than the kinds of values reported for J-Test measurements on shorter timescales!"
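The arithmetic in that quote is easy to check with a short sketch. The 8 ppm and 2 second figures come from the quote; the function name and parameters are my own, purely for illustration.

```python
def accumulated_timing_error(rate_offset_ppm, duration_s):
    """Timing error in seconds built up by a constant replay-rate offset."""
    return rate_offset_ppm * 1e-6 * duration_s

# Figures taken from the quoted 48 kHz result: 8 ppm held for about 2 seconds.
error_s = accumulated_timing_error(8, 2)
error_us = error_s * 1e6    # microseconds
error_ps = error_s * 1e12   # picoseconds

print(f"{error_us:.0f} microseconds = {error_ps:,.0f} picoseconds")
```

For comparison, jitter figures from J-test style measurements are normally quoted in picoseconds or nanoseconds, which is why the accumulated figure looks so large.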

When I considered why this gross level of jitter would not show up as distortion on a standard audio FFT, I initially thought that it would be seen as a flattening of the central peak, since it is very close-in jitter, much like very close-in phase noise shows as a widening of the base of the carrier spur in an FFT.

Then I considered & chatted & thought that a standard FFT as used in audio (Stereophile) would probably not pick up this distortion anyway because of the construction of the test. Am I right in thinking that typically, for a 16/44 sample rate using a 1 kHz test tone, an analysis of about 1 sec of audio is all that's considered necessary for "full FFT analysis"? This may be repeated 4, 8, 16 or 32 times for averaging by an AP. This 1 sec sample window would likely miss the jump in timing that occurs every 10 secs. Even if the FFT sample window was 10 secs, would it actually show the resulting distortions in its plot? To my simplistic understanding of FFT operation, it will treat random events (this jump) as noise & it will become buried in the grass at the bottom of the FFT plot.
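To put rough numbers on this, here is a minimal sketch. It assumes a 1 kHz tone, borrows the 8 ppm rate step from LeSurf's 48 kHz result, and takes the 1 sec and 10 sec windows, the 2 sec jump and the 10 sec repeat period mentioned above at face value. The function names are hypothetical, and this is not a model of how an AP analyser is actually set up.

```python
def bin_width_hz(window_s):
    """Frequency resolution of an FFT taken over a window of this length."""
    return 1.0 / window_s

def tone_shift_hz(tone_hz, rate_offset_ppm):
    """How far a tone moves in frequency when the replay rate is offset."""
    return tone_hz * rate_offset_ppm * 1e-6

def overlap_fraction(window_s, event_s, period_s):
    """Fraction of randomly placed windows that touch the event at all."""
    return min(1.0, (window_s + event_s) / period_s)

shift = tone_shift_hz(1000, 8)  # assumed 1 kHz tone, 8 ppm rate step

for window in (1, 10):
    width = bin_width_hz(window)
    print(
        f"{window:>2} s window: bin width {width:.3f} Hz, "
        f"tone shift is {shift / width:.1%} of one bin, "
        f"{overlap_fraction(window, 2, 10):.0%} of windows overlap a jump"
    )
```

Under these assumptions the tone only moves by a small fraction of one FFT bin during a jump, which would be consistent with the effect sitting under the central peak rather than appearing as separate spurs. It doesn't settle the question, though.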

Any experts wish to correct or discuss my musings?

For reference, the description accompanying the first IQ-test graph reads: "The blue line shows the results for USB via the Halide Bridge. The red line shows the results for a direct USB connection into the DACMagic. In this case the test was using ‘CD standard’ LPCM data – i.e. a 44·1k sample rate using 16 bit values. By comparing the two replay methods you can clearly see that the direct USB connection produces periodic ‘jumps’ in the replay speed. These don’t occur when sending the data via the Halide Design USB-SPDIF Bridge. The Bridge produces a much smoother and more regular replay of the data."
