Redbook presents no compromises if every link of the digital production chain was executed impeccably.

I have to disagree with this, even if the digital chain is "mathematically perfect". The first problem is that I have never, ever heard an anti-aliasing filter that works transparently (regardless of the quality of the equipment) when it has only a couple of semitones of bandwidth in which to perform its required mathematical function. If we accept that a full "high fidelity" bandwidth goes to 20 kHz, then you only need to raise the pitch by approximately two semitones (a whole tone) and you are at the absolute mathematical limit, the Nyquist frequency of 22.05 kHz. In all of my experience, that is insufficient bandwidth to play with if you really do want the digital result to sound like the analogue input from the microphones (or anything else analogue). The result is a slight hardening of the sound, a slight loss of timing and less accurate reproduction of instrumental timbre, especially that of massed violins in large orchestras. The only workaround, if one is forced to work at a 44.1 kHz sampling rate, is to band-limit the original source material so that there are around 4 to 5 semitones of bandwidth (or more) for the anti-aliasing filters to work with. This can be achieved, for example, by specifying a lower cut-off frequency in the downsampling process when preparing a CD master, assuming the material was recorded at a higher sampling frequency to begin with.
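For anyone who wants to check the arithmetic behind those semitone figures, here is a minimal sketch. It assumes equal-tempered semitones (a frequency ratio of 2^(1/12) each); the function names are made up for illustration. It gives the interval from 20 kHz up to the 44.1 kHz Nyquist limit, and the passband edge you would need to choose to leave 4 or 5 semitones of room.

```python
import math

def semitones_between(f_low_hz, f_high_hz):
    """Interval from f_low_hz up to f_high_hz in equal-tempered semitones."""
    return 12.0 * math.log2(f_high_hz / f_low_hz)

def cutoff_for_headroom(sample_rate_hz, headroom_semitones):
    """Highest passband edge that leaves the requested interval below Nyquist."""
    nyquist_hz = sample_rate_hz / 2.0
    return nyquist_hz / 2.0 ** (headroom_semitones / 12.0)

CD_RATE_HZ = 44_100

# Room between a 20 kHz passband edge and Nyquist at the CD rate.
print(f"20 kHz to Nyquist: {semitones_between(20_000, CD_RATE_HZ / 2):.2f} semitones")

# Passband edge needed to leave 4 or 5 semitones for the filter.
for headroom in (4, 5):
    edge_hz = cutoff_for_headroom(CD_RATE_HZ, headroom)
    print(f"{headroom} semitones of room -> cut-off near {edge_hz:.0f} Hz")
```

The gap at 44.1 kHz comes out at roughly 1.7 semitones, and leaving 4 to 5 semitones means cutting off at roughly 17.5 kHz to 16.5 kHz.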

This is also the reason why I find the biggest improvement in digital recording is achieved when moving from 44.1 kHz to 48 kHz. That step, in my experience, is much more significant than anything beyond it, including 48 kHz versus 96 kHz versus DSD, etc. I also agree with Dan Lavry, however, that very high sampling rates are even more harmful. He and I both agree the ideal sampling rate would be around 60 kHz, but no such sample rate is used in any commercially available equipment so far as I am aware. Certainly beyond 96 kHz, more problems are introduced than solved (ultrasonics, intermodulation distortion caused by ultrasonics, clock accuracy, etc). These issues might be smaller the better the equipment, but even so, the best equipment will still generally measure better at low sampling rates than at very high ones, except of course in the aspect of recordable bandwidth. Even as things stand, I prefer 48 kHz even to 96 kHz - both are still slightly compromised, but given there is no real benefit (in my opinion) in recording beyond around 21.5 kHz, 48 kHz is the best compromise: it affords sufficient audio bandwidth and enough room for the anti-aliasing filters to work with reasonable transparency, and there are no issues with ultrasonics or high-frequency clocks causing any problems.
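To put rough numbers on the room each sampling rate leaves for the filter, here is a small sketch. It assumes the 20 kHz passband edge used earlier in this post unless another edge is passed in; `transition_band` is a made-up helper name, and the list of rates is just the ones mentioned above.

```python
import math

def transition_band(sample_rate_hz, passband_edge_hz=20_000.0):
    """Return (width in Hz, width in semitones) between passband edge and Nyquist."""
    nyquist_hz = sample_rate_hz / 2.0
    width_hz = nyquist_hz - passband_edge_hz
    width_semitones = 12.0 * math.log2(nyquist_hz / passband_edge_hz)
    return width_hz, width_semitones

for rate_hz in (44_100, 48_000, 60_000, 96_000):
    width_hz, width_st = transition_band(rate_hz)
    print(f"{rate_hz / 1000:g} kHz: {width_hz:.0f} Hz, {width_st:.1f} semitones")

# The same calculation with the 21.5 kHz bandwidth mentioned above, at 48 kHz.
width_hz, width_st = transition_band(48_000, 21_500.0)
print(f"48 kHz with a 21.5 kHz edge: {width_hz:.0f} Hz, {width_st:.1f} semitones")
```

With a 20 kHz edge, the room grows from about 1.7 semitones at 44.1 kHz to about 3.2 at 48 kHz and about 7 at 60 kHz. These are only the widths available to the filter; how transparent a given filter sounds within them is a separate question.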

Incidentally, I should point out that, in my opinion, recordable bandwidth is the least of the concerns when it comes to digital recording. The far bigger problems are having enough bandwidth to be able to use as near to transparent a filter as possible, keeping jitter extremely low throughout the whole recording and reproduction process, and reducing the noise floor as much as possible.

When it comes to 16 bits, again I have problems with this. Whilst I fully accept that mathematically, and thus in theory, there is no need whatsoever for the final product to be anything more than 16 bits, the problem is that even such low-level (16 bit) noise floors affect the music that we do hear at much higher levels. Even if you were to take a "24 bit" recording and then truncate it (only to find that there was never any actual musical content from bit 17 onwards), this still holds true. For some reason - and I do not claim to have any explanation - digital noise floors at extraordinarily low levels still affect what we hear even at "normal" listening levels.

For example, take a recording made at 24 bits that does not contain any musical content beyond 16 bits, but DOES have a DIGITAL noise floor equal to or beyond, say, 20 bits across the entire 20 Hz to 20 kHz spectrum. This could very typically happen if an old analogue release from the 1950s to 1980s is remastered for distribution as "high res" 24/48, 24/96, 24/88.2, 24/176.4 PCM or DSD, etc. Now I fully accept there will likely be nothing whatsoever on those tapes that requires more than 16 bits to record successfully in the digital domain (probably even less in some cases), yet the low-level digital noise floor is still changing the actual playback sound of the digital copy versus the original analogue. This continues to occur unless the noise floor is as low as it is possible to achieve with modern electronics (I have not kept up with the latest ADC technology, but last time I checked it still wasn't as good as that of the best DACs - so I think we are still stuck at around 21 bits for ADCs at the present time).
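For reference, the theoretical noise floors being compared here can be worked out from the usual textbook figure of about 6.02 dB per bit plus 1.76 dB. The sketch below assumes an ideal, undithered quantiser, a full-scale sine wave as the reference, and noise measured across the whole band up to Nyquist. Real converters, dither and noise shaping all move these numbers, so treat them as theory only; `ideal_snr_db` is a name chosen for illustration.

```python
import math

def ideal_snr_db(bits):
    """Full-scale sine power over quantisation noise power, ideal N-bit quantiser."""
    # 20*log10(2**N) is ~6.02 dB per bit; 10*log10(1.5) is the ~1.76 dB sine term.
    return 20.0 * math.log10(2.0 ** bits) + 10.0 * math.log10(1.5)

for bits in (16, 20, 21, 24):
    print(f"{bits} bits: noise floor about {-ideal_snr_db(bits):.1f} dB re full-scale sine")
```

That puts 16 bits at roughly -98 dB and 24 bits at roughly -146 dB, with the 20 and 21 bit figures mentioned above falling in between.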

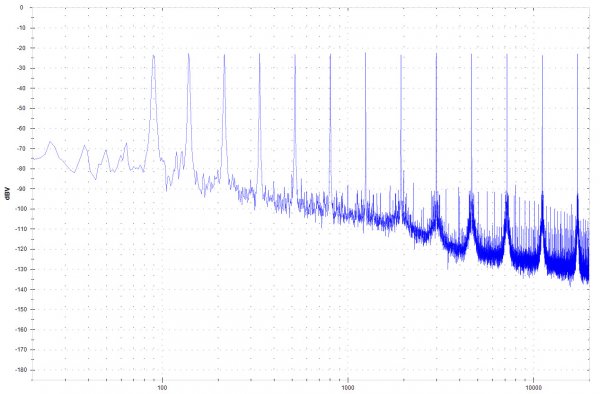

And it is quite easy to hear what the noise floor does to the music we do hear - you can take such a digital file as described above and add 16 bit noise shaping. By doing this, you are not making the slightest change whatsoever to the actual musical content, as all of that probably exists within the first 13 to 15 bits at best. All you are doing is making changes to the digital noise floor - often at levels well below -110 dBFS if using 16 bit noise shaping - so well below what any listener is ever going to hear (unless they wish to destroy both their hearing and their system). But what does happen is very consistent: wherever a frequency band has a higher digital noise floor, it has less clarity, it sounds subjectively more suppressed than bands with a lower noise floor, and its 3D imaging is worse (the sense of space around the instrument and the hall it is being played in).

As an example, if I choose the "low, optimum, B curve" profile using PSP X-Dither on a 24 bit file, I retain an excellent digital noise floor in the low to upper midrange. But I am adding a lot of noise (relatively speaking) to the low end, even though the total amount of noise added is 16 bits mathematically speaking. This results in poorer bass quality (muddier and more "boomy" sounding), a fantastic sounding midrange that sounds just like the analogue original, but also an exaggerated top end with an added sheen to it that wasn't there in the 24 bit original.

If, on the other hand, I choose a reasonably flat dither to 16 bits instead, nothing I hear has the qualities of the 24 bit original. Every frequency band is audibly compromised; it is just that the compromises are smaller because I am spreading them out across the entire frequency range. Sometimes this approach works better, sometimes another one does.
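To make the difference between flat dither and noise shaping concrete, here is a minimal sketch of both when reducing 24 bit samples to 16 bits. It is NOT the PSP X-Dither algorithm or its "B curve": it assumes plain TPDF dither and a generic first-order error-feedback shaper, which simply pushes noise towards the top of the band, and the test signal and helper names are made up for illustration. What it shows is that shaping does not remove noise, it only moves it from one band to another.

```python
import math
import random

SHIFT = 8                 # 24-bit -> 16-bit discards 8 bits
STEP = 1 << SHIFT         # one 16-bit LSB expressed in 24-bit units (256)
MIN16, MAX16 = -32768, 32767

def requantise(samples24, shaped=False, seed=0):
    """Reduce 24-bit integer samples to 16-bit integers with TPDF dither.

    shaped=False: flat (white) dither only.
    shaped=True:  also subtracts the previous sample's error before
                  quantising (first-order shaping, noise filter 1 - z^-1).
    """
    rng = random.Random(seed)
    out, prev_err = [], 0.0
    for x in samples24:
        target = x - prev_err if shaped else float(x)
        dither = (rng.random() - rng.random()) * STEP    # triangular, +/- 1 LSB
        q = math.floor((target + dither) / STEP + 0.5)   # round to nearest
        q = max(MIN16, min(MAX16, q))                    # keep within 16 bits
        prev_err = q * STEP - target
        out.append(q)
    return out

def error_signal(samples24, samples16):
    """Difference between the 16-bit result and the 24-bit source, in 24-bit units."""
    return [q * STEP - x for x, q in zip(samples24, samples16)]

def total_power(err):
    return sum(e * e for e in err) / len(err)

def low_band_power(err, block=32):
    """Mean square of block-averaged error: a crude low-pass measurement."""
    means = [sum(err[i:i + block]) / block
             for i in range(0, len(err) - block + 1, block)]
    return sum(m * m for m in means) / len(means)

# Hypothetical test signal: one second of a 1 kHz sine at -60 dBFS, 48 kHz.
RATE = 48_000
AMP = (1 << 23) * 10 ** (-60 / 20)
signal = [round(AMP * math.sin(2 * math.pi * 1000 * n / RATE)) for n in range(RATE)]

flat_err = error_signal(signal, requantise(signal, shaped=False))
shaped_err = error_signal(signal, requantise(signal, shaped=True))

print("total error power  flat / shaped:", total_power(flat_err), total_power(shaped_err))
print("low-band power     flat / shaped:", low_band_power(flat_err), low_band_power(shaped_err))
```

With this shaper the total error power goes up while the low-band error power goes down, which is the trade being described: a quieter floor in one region paid for with a noisier one elsewhere. Commercial shapers use higher-order, psychoacoustically weighted curves, so where the noise ends up differs from this sketch.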

But for those who are sceptical: whilst you might want to dismiss my experiences because I am not a recording engineer, can you as easily dismiss the claims of audio engineers who work regularly with 24 bit masters and then spend a lot of time producing their CD masters, with particular attention paid to the dithering process? If 16 bits were enough, they wouldn't have any need to be so obsessive about it - they could just pick anything they liked that was mathematically perfect at 16 bits and that would be fine. But of course these engineers do agonise over it because they hear what I do - the completely inaudible noise floor affecting the sonic quality of what we do hear, regardless of the actual musical content's "true" mathematical bit depth.

And the above is one of the areas where digital theory does not pan out in actual practice. Because, yes, 16 bits is enough - I don't argue with that when it comes to storing the actual musical content itself. But you need more than 16 bits because the inaudible noise floor affects the sound of the audible content. And that is something never mentioned in the theory books.