I'm not sure, but that would be a good way to kick off the conversation. Do you have a good working definition for each? Perhaps we could all learn something new that hasn't been recycled over several years. It does appear to be a very critical parameter in achieving the ultimate levels of digital reproduction.

I will explain, but I don't think it is the right way to look at the problem, because in audio the terminology is mixed up and doesn't follow the formal definitions of these metrics.

Audio, as you know, has multiple sampling rates. Perhaps less well known is that even when the sampling rate is specified, such as 44,100 Hz, that doesn't mean precisely that many samples arrive each second. You could get 44,099 in one second and 44,101 in the next. Deviations of up to 5% are allowed. In addition, the incoming signal comes with noise and distorted waveforms, which one needs to clean up.
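
Sample-rate deviations like these are usually expressed in parts per million (ppm). Here is a minimal Python sketch of that arithmetic; the 5% figure is simply the tolerance quoted above plugged into a hypothetical helper, `tolerance_window`, and neither function comes from any standard.

```python
NOMINAL_HZ = 44_100

def offset_ppm(measured_hz: float, nominal_hz: float = NOMINAL_HZ) -> float:
    """Deviation of a measured sample rate from nominal, in parts per million."""
    return (measured_hz - nominal_hz) / nominal_hz * 1e6

def tolerance_window(nominal_hz: float, tolerance_pct: float) -> tuple[float, float]:
    """Lowest and highest rate allowed by a +/- percentage tolerance."""
    delta = nominal_hz * tolerance_pct / 100.0
    return nominal_hz - delta, nominal_hz + delta

for rate in (44_099, 44_101):
    print(f"{rate} Hz -> {offset_ppm(rate):+.1f} ppm")
print(tolerance_window(NOMINAL_HZ, 5.0))
```

A drift of one sample per second at 44.1 kHz works out to roughly 23 ppm, far inside a 5% window of 41,895 to 46,305 Hz.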

The solution to these problems is a Phase-Locked Loop, or PLL for short. Simply put, there is a programmable oscillator that can be "told" to generate any of the frequencies of interest. We start with one frequency and compare the "phase" (shift in time) of our oscillator against the incoming signal. If our frequency is different, we quickly get out of phase/sync. A phase detector compares the incoming signal to what we are producing, creating an "error" signal that tells the oscillator what to do to match the frequency of the input.

Here is a block diagram for a PLL:

As you can see, this forms a loop in which we continually perform the above comparison and correction. Loops can oscillate, just like the feedback you may get from a mic to a speaker. The solution is to filter the response before it reaches the point where it causes oscillation. We can narrow the filter further to also filter out the noise in the incoming signal.
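
To make the loop concrete, here is a minimal phase-domain sketch in Python. It assumes a digital PLL whose loop filter is a proportional-plus-integral (PI) stage, one common choice but not the only one; the gains `kp` and `ki`, the 1 MHz update rate and the function names are hypothetical illustration values. The incoming clock runs at 44,101 Hz while the oscillator starts at 44,100 Hz; the phase detector output drives the filter, and the filter steers the oscillator.

```python
import math

def wrap(phase: float) -> float:
    """Wrap a phase difference into [-pi, pi)."""
    return (phase + math.pi) % (2 * math.pi) - math.pi

def simulate_pll(f_in: float, f_nco: float, update_hz: float,
                 kp: float = 0.1, ki: float = 0.005, n_steps: int = 5000):
    """Phase-domain model of a second-order PLL with a PI loop filter.

    Returns the per-step phase errors (radians) and the frequency (Hz)
    the oscillator has settled on. All parameter values are hypothetical.
    """
    theta_in = theta_out = integrator = 0.0
    errors = []
    for _ in range(n_steps):
        theta_in += 2 * math.pi * f_in / update_hz        # incoming clock advances
        error = wrap(theta_in - theta_out)                 # phase detector
        integrator += ki * error                           # loop filter: integral path
        correction = kp * error + integrator               # loop filter output (rad/step)
        theta_out += 2 * math.pi * f_nco / update_hz + correction  # oscillator steered by error
        errors.append(error)
    locked_hz = f_nco + integrator * update_hz / (2 * math.pi)
    return errors, locked_hz

errors, locked_hz = simulate_pll(f_in=44_101, f_nco=44_100, update_hz=1_000_000)
print(f"first error {errors[0]:.3f} rad, last error {errors[-1]:.1e} rad")
print(f"oscillator settled at {locked_hz:.3f} Hz")
```

With these gains the phase error decays toward zero and `locked_hz` converges on the 44,101 Hz input, the integrator having "learned" the frequency offset. Smaller gains narrow the loop bandwidth: the loop locks more slowly but filters out more of the incoming noise. Gains that are too large make the loop ring or go unstable, which is the oscillation problem described above.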

PLLs are used routinely in many circuits outside of audio, and their formal characterization comes from those circuits rather than from audio. In that context, "phase error" is the average difference between the incoming signal's phase and the phase we generate in the PLL. Think of it as an average delay. In audio this is not important, but in digital circuits two circuits being out of phase can matter and cause the circuit to do something other than intended.
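
Reading phase error as a delay is a one-line conversion: the phase error as a fraction of a cycle, divided by the clock frequency, gives seconds. A minimal sketch, with a hypothetical helper name and an arbitrary 10-degree example:

```python
def phase_error_to_delay(phase_deg: float, clock_hz: float) -> float:
    """Convert a phase error in degrees to the equivalent time offset in seconds."""
    cycles = phase_deg / 360.0      # fraction of one clock period
    return cycles / clock_hz        # one period lasts 1 / clock_hz seconds

# A 10-degree average phase error on a 44.1 kHz clock:
print(f"{phase_error_to_delay(10, 44_100) * 1e9:.0f} ns")
```

For that example the delay is roughly 630 ns.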

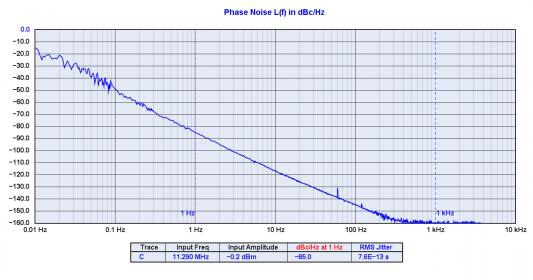

Likewise, phase noise is the noise generated by the PLL's variable-frequency oscillator. That circuit is not perfect either, and it generates variations of its own. Phase noise is measured in dBc/Hz. A simple conversion (integrating the phase noise over a band of frequencies) yields the familiar phase jitter, which has units of time (seconds).
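
The conversion works like this: the single-sideband phase noise L(f) is converted from dBc/Hz to linear power, integrated over the offset band of interest, doubled to count both sidebands, square-rooted to get RMS phase in radians, and divided by 2π times the clock frequency. The Python sketch below assumes the profile is given as a few points and treats each segment as a straight line on log-log axes; the 24.576 MHz clock and the flat -140 dBc/Hz profile are made-up example values, and the function names are hypothetical.

```python
import math

def segment_power(f1, l1_dbc, f2, l2_dbc):
    """Integrate L(f) between two points, assuming a straight line on log-log axes."""
    p1, p2 = 10 ** (l1_dbc / 10), 10 ** (l2_dbc / 10)   # dBc/Hz -> linear (1/Hz)
    k = math.log(p2 / p1) / math.log(f2 / f1)           # power-law slope of the segment
    if abs(k + 1) < 1e-9:                                # a 1/f segment integrates to a log
        return p1 * f1 * math.log(f2 / f1)
    return p1 * f1 / (k + 1) * ((f2 / f1) ** (k + 1) - 1)

def rms_jitter_s(clock_hz, profile):
    """RMS jitter in seconds from (offset_hz, dBc/Hz) points sorted by offset."""
    area = sum(segment_power(f1, l1, f2, l2)
               for (f1, l1), (f2, l2) in zip(profile, profile[1:]))
    phase_rms_rad = math.sqrt(2 * area)                  # x2: both sidebands
    return phase_rms_rad / (2 * math.pi * clock_hz)

# Hypothetical flat -140 dBc/Hz floor from 100 Hz to 1 MHz on a 24.576 MHz clock:
jitter = rms_jitter_s(24.576e6, [(100, -140), (1e6, -140)])
print(f"{jitter * 1e12:.2f} ps RMS")
```

For this flat example the result is a little under 1 ps RMS. Note that the answer depends on the integration band, which is one more reason a bare jitter number needs context.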

As I said at the outset, I don't think any of this is that important in the context of audio, where phase error, jitter and phase noise are used almost interchangeably. I prefer to just look at all-up system jitter. Its source does not matter. What matters, and matters a ton, is its spectrum.

We care about the spectrum because almost everything we know about the psychoacoustics of such distortions is based on the frequency domain. A bunch of audio samples jiggling back and forth ever so slightly in time does not tell us what is really going on.

The other thing that matters is the incoming signal, in our case music. The impact of timing variations is proportional to frequency: the faster the signal moves, the more it is distorted when its timing jiggles back and forth. For this reason the standard "J-Test" signal sits at 11 to 12 kHz (one quarter of the 44.1 or 48 kHz sampling rate). Conversely, for a subwoofer we wouldn't care about jitter, as its frequencies are so low.
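
The proportionality to frequency follows from the slope of a sine: a tone of amplitude A at frequency f changes by at most A·2πf per second, so a small timing error Δt can move a sample by up to about A·2πf·Δt. A small Python sketch comparing a 50 Hz subwoofer tone with a 12 kHz J-Test-like tone under the same hypothetical 1 ns timing error:

```python
import math

def worst_case_error(freq_hz: float, timing_error_s: float, amplitude: float = 1.0) -> float:
    """Largest sample error a small timing error can cause on a sine.

    The sine's steepest slope is amplitude * 2*pi*f, so a timing error dt
    moves the sample by at most roughly slope * dt.
    """
    return amplitude * 2 * math.pi * freq_hz * timing_error_s

dt = 1e-9   # hypothetical 1 ns timing error
for f in (50, 12_000):
    err = worst_case_error(f, dt)
    print(f"{f:>6} Hz: error {err:.2e} of full scale ({20 * math.log10(err):.1f} dBFS)")
```

The same timing error does 240 times more damage at 12 kHz than at 50 Hz, simply because 12,000 / 50 = 240.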

Back to the jitter spectrum: the higher its frequencies, the more damage it can cause. The reason is masking. Jitter shows up as distortion products spaced away from the signal by the jitter frequency. When these distortions are much lower in level than the signal itself, as is the case with audio jitter, the signal can mask the ones that land close to it. Higher-frequency jitter pushes them farther away, out from under the signal. You can't hear a whisper in a rock concert. Same here. But go to a quiet room and you can hear that whisper.
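
One way to see why the jitter spectrum matters is to jitter a pure tone with a single sinusoidal jitter component. For small jitter of peak amplitude J at frequency f_j on a tone at f, the tone gains two sidebands at f ± f_j, each about 20·log10(π·f·J) dB below the tone. The sideband level depends on the signal frequency, while their distance from the tone, and therefore how well the tone can mask them, depends on the jitter frequency. The stdlib-only Python sketch below checks that approximation numerically; the 48 kHz rate, 12 kHz tone, 1 kHz jitter frequency and 1 ns peak jitter are arbitrary example values.

```python
import cmath
import math

def sideband_level_dbc(signal_hz: float, jitter_peak_s: float) -> float:
    """Predicted level of each jitter sideband relative to the tone (small-jitter approximation)."""
    return 20 * math.log10(math.pi * signal_hz * jitter_peak_s)

def measure_bin(samples, bin_index):
    """Amplitude of one DFT bin, scaled so a full-scale sine reads 1.0."""
    n = len(samples)
    acc = sum(x * cmath.exp(-2j * math.pi * bin_index * k / n) for k, x in enumerate(samples))
    return 2 * abs(acc) / n

fs, f, fj, jitter = 48_000, 12_000, 1_000, 1e-9   # hypothetical example values
# One second of a 12 kHz tone sampled at jittered instants; 1 s of data gives 1 Hz bins.
samples = [math.sin(2 * math.pi * f * (n / fs + jitter * math.sin(2 * math.pi * fj * n / fs)))
           for n in range(fs)]
for hz in (f - fj, f + fj):
    measured = 20 * math.log10(measure_bin(samples, hz))
    print(f"{hz} Hz sideband: {measured:.1f} dBc measured, "
          f"{sideband_level_dbc(f, jitter):.1f} dBc predicted")
```

Moving `fj` from 1 kHz to, say, 5 kHz leaves the sideband level unchanged but pushes the sidebands farther from the tone, where masking helps less.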

For these reasons, there is no simple answer to "is it audible or not?" We can say that it is inaudible for a vast swath of the population, as these distortions are unfamiliar and hence not something people can easily pick out.

Personally, I would like to understand this well enough to put a relative number on this phase error, perhaps as it relates to timing differentials; in other words, arrival times and how they would affect relative imaging in a two-channel system. The constant shifting of arrival times could be the source of the digital "edginess" that many complain about.

As I explained above, single-number specs tell us nothing about audibility.

We can, however, take a conservative stance and ask what level of jitter stays below our single bit of resolution; that is, what level of jitter produces an error equal to the least significant bit of our audio sample. Assuming the highest frequency of interest is 20 kHz, this gives us a jitter spec of 500 picoseconds (500 trillionths of a second). Jitter above this may well be inaudible, but showing that requires a type of analysis that is beyond what anyone would want to do. It is like putting an I-beam in a structure and being done with it, instead of worrying about how thin the wood framing could be and still do the job.
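
The derivation is not spelled out above, so here is one hedged way to reproduce the order of magnitude. Assume full scale spans -1 to +1 (so one LSB is 2/2^bits), take a sine at the highest frequency of interest, and ask what timing error moves it by one LSB at its steepest point, where the slope is A·2πf. The bit depth and the amplitude of the 20 kHz content are assumptions the result depends on directly, and the helper name is hypothetical.

```python
import math

def lsb_jitter_bound(bits: int, max_freq_hz: float, amplitude: float = 1.0) -> float:
    """Timing error (s) at which a sine's steepest slope moves the sample by one LSB.

    Assumes full scale spans -1..+1, so one LSB is 2 / 2**bits, and that the
    sine at max_freq_hz has the given peak amplitude (1.0 = full scale).
    """
    lsb = 2 / 2 ** bits
    max_slope = amplitude * 2 * math.pi * max_freq_hz   # full-scale units per second
    return lsb / max_slope

for amp in (1.0, 0.5):
    bound = lsb_jitter_bound(16, 20_000, amp)
    print(f"16-bit, 20 kHz at amplitude {amp}: {bound * 1e12:.0f} ps")
```

Under these assumptions a full-scale 16-bit, 20 kHz sine gives roughly 240 ps, and the same tone at half amplitude (-6 dBFS) roughly 490 ps, so the bound lands in the same few-hundred-picosecond range as the 500 ps target.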

Achieving 500 ps of jitter is not hard, and that is another reason it is a good target to have. You can of course target more stringent values, but for me, that is a simple, achievable minimum.