Actually, that is not correct.

It is actually

I have managed the development of computers and software systems for 30 years. This is my professional opinion, not conjecture. Let me know if anyone telling you otherwise has the credentials to know what they are talking about.

I started the thread to clarify a question that had occurred to me: whether jitter in data could be saved along with the data. I was reasonably sure the answer was no, but wanted some clarification and validation. It is my fault that I then sidetracked the thread with the Ethernet question.
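As a rough illustration of why timing cannot be "saved along with the data", here is a minimal sketch under simplifying assumptions. It treats a network transfer as numbered chunks that arrive with random delays (jitter), possibly out of order, and are reassembled by sequence number before being stored. The function `receive_stream`, the 1 ms nominal spacing, and the delay range are hypothetical. The 1460-byte chunk size is chosen only because it is a typical TCP payload on standard Ethernet. Packet loss and retransmission are not modeled.

```python
import hashlib
import random

def receive_stream(payload: bytes, chunk_size: int, max_delay_ms: float, seed: int) -> bytes:
    """Hypothetical receiver model: the payload is split into numbered chunks,
    each chunk gets a random (jittered) arrival time, and the receiver
    reassembles the chunks by sequence number. Packet loss is not modeled."""
    rng = random.Random(seed)
    chunks = []
    for seq, start in enumerate(range(0, len(payload), chunk_size)):
        # Nominal 1 ms spacing plus a random delay: this is the "network jitter".
        arrival_ms = seq * 1.0 + rng.uniform(0.0, max_delay_ms)
        chunks.append((arrival_ms, seq, payload[start:start + chunk_size]))
    # Chunks are handled in whatever order they happen to arrive...
    by_arrival = sorted(chunks, key=lambda c: c[0])
    # ...but are put back in sequence order before being stored.
    by_seq = sorted(by_arrival, key=lambda c: c[1])
    # Only the bytes are kept; the arrival times are never written anywhere.
    return b"".join(data for _, _, data in by_seq)

# One second's worth of CD-audio-sized data (44,100 frames x 4 bytes), filled with random bytes.
audio = bytes(random.Random(0).getrandbits(8) for _ in range(44100 * 4))
steady = receive_stream(audio, 1460, max_delay_ms=0.0, seed=1)
jittery = receive_stream(audio, 1460, max_delay_ms=25.0, seed=2)
print(hashlib.sha256(steady).digest() == hashlib.sha256(jittery).digest())  # True
```

The stored result contains only byte values. No field exists in it where arrival timing could be recorded, so the steady and jittery transfers produce identical files.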

However, the HD question occurred to me in another discussion regarding whether or not different Ethernet cables can affect sound quality. My initial thought is no, but there is anecdotal data that it does, and AudioQuest and others are selling 'better' Ethernet cables for this issue.

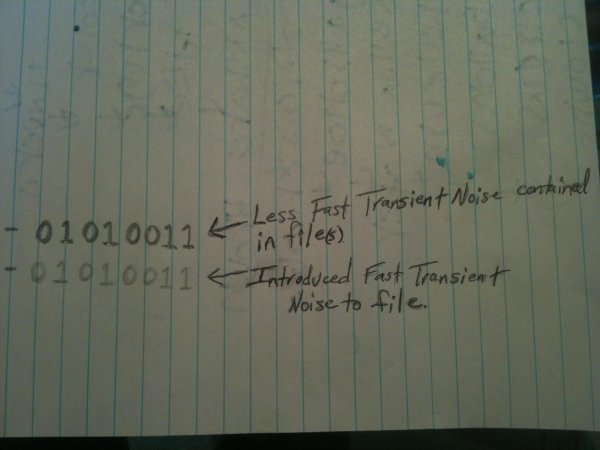

Answering this was the very purpose of my post. I explained that changing cables and such could possibly affect the noise and/or the clock signal coming out of the PC. That is the *only* way it can affect what you hear. It is *impossible* for it to be what you are saying, i.e. jitter being introduced in the digital domain and accumulating.

The above is not like other matters in audio. If that fundamental aspect were not true, you would be invalidating the entire field of digital design. It is not even open to argument, let alone true.

I think everyone knows that our perceptions can manufacture a huge number of audible differences. I posted a "review" recently where the person said Cat5e and Cat6 sounded different and that the former was "grayer" sounding. A hundred bucks says the Cat5e cable was gray, and that is why he thought the analog output of the system sounded grayer.

All I am trying to do is come up with some possible scenarios or reasons where this is technically possible, even if unlikely. Since an Ethernet cable, like any other cable, will attenuate the signal, or be subject to interference from outside sources, it certainly seems reasonable that the signal can be degraded enough to result in the receiver circuitry adding jitter as the musical data is reconstructed. The question is can this jitter make it to the DAC, or will it be eliminated as it travels through the rest of the circuit.
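To make the "receiver reconstructs the data" step concrete, here is a toy sketch of what a receiver does with a degraded signal. It assumes a simple NRZ line code (real Ethernet PHYs use other line codes and proper clock recovery, so this is not a model of any actual Ethernet hardware). Each bit edge is shifted by random timing jitter of up to 30% of a bit period, and Gaussian noise is added to every sample. The receiver samples at the centre of each nominal bit slot using its own clock and decides 1 or 0 by the sign. The names `transmit`, `recover`, `spb` (samples per bit) and all parameter values are hypothetical choices made for illustration.

```python
import random

def transmit(bits, spb, jitter_frac, noise_sigma, rng):
    """Toy NRZ line model (assumption, not a real Ethernet PHY): each bit is a
    +1/-1 level nominally lasting `spb` samples. Every bit edge is displaced by
    random timing jitter of up to `jitter_frac` of a bit period, and every
    sample gets Gaussian noise with standard deviation `noise_sigma`."""
    n = len(bits)
    edges = [0]
    for i in range(1, n):
        shift = int(rng.uniform(-jitter_frac, jitter_frac) * spb)
        edges.append(i * spb + shift)
    edges.append(n * spb)
    wave = []
    for i, bit in enumerate(bits):
        level = 1.0 if bit else -1.0
        # The jittered edges make each bit's segment longer or shorter than spb.
        for _ in range(edges[i], edges[i + 1]):
            wave.append(level + rng.gauss(0.0, noise_sigma))
    return wave

def recover(wave, spb):
    """Slice each bit at the centre of its nominal slot (the receiver's own
    clock) and decide 1/0 by sign. The edge timing is not carried forward."""
    return [1 if wave[i * spb + spb // 2] > 0.0 else 0
            for i in range(len(wave) // spb)]

rng = random.Random(42)
sent = [rng.getrandbits(1) for _ in range(1000)]
wave = transmit(sent, spb=16, jitter_frac=0.3, noise_sigma=0.1, rng=rng)
received = recover(wave, spb=16)
print(received == sent)  # True: the jitter is gone once the bits are decided
```

The output of `recover` is a list of bit values with no timing attached. If the signal were degraded so badly that decisions failed, the failure would show up as wrong bits rather than as "added jitter". On a real Ethernet link, corrupted frames are caught by the frame check sequence (a CRC-32) and discarded rather than passed on.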

I gave you that "possible scenario." But you keep going to an *impossible* scenario. Why? Is that other explanation not satisfactory enough? And to repeat: a different cable may radiate the Ethernet signal differently, and that radiation could somehow bleed into the DAC.

No one can *prove* to you that the above is impossible. But we can prove that your hypothesis is impossible. We are not giving you that detail because it requires being an electrical engineer to understand. But trust me, this dog don't hunt.

My assumption is that it depends. It depends on the topology used and on the ancillary equipment.

I doubt that people are imagining it. That is the argument cable deniers use for every other cable. It wasn't true then, and I suspect it will not be true in this case either, or at least not in some implementations.

The moment we doubt that, we are down a path that is not objective. No matter what kind of subjectivist you are, you have to allow some margin of error in your perception. Recall that I went down this path anyway in my post: I assumed there was a difference and provided a *theory* of where it could come from. That is where you want to go with this, not after jitter in the digital bits. That argument simply does not wash.