Wave Field Synthesis

- Thread starter Gregadd


Isn't this what the soundbars use??

Rob

I don't think so. It's much more comprehensive.

Some high-end soundbars do use DSP in a similar approach. Probably not as comprehensive, nor with as many drivers across such a large area, agreed! This is also at least partly the basis for many room correction and effects algorithms.

Presumably the difference between this and binaural recording using headphones is that the listener can move around in the sound field - for all I know, it only takes small, unconscious movements of a listener's head to tell the difference between a sound field and a static, binaural recording/simulation. Would I be correct in thinking, though, that if the listener's exact position and orientation were known, the listener could move around in a simulated sound field using headphones? (A job for the ubiquitous Microsoft Kinect?)

From what I can tell, WFS does not address the fact that in live music at a venue, reflections of sound come from all directions in a 2 pi hemisphere. WFS seems to only claim to reconstruct the field along a single horizontal line in front of the listener. If this is the case, it is fatally flawed IMO.


Just a case of throwing (a lot) more speakers at it? You could do it for free with the headphone/position sensor solution though.

Physical fundamentals

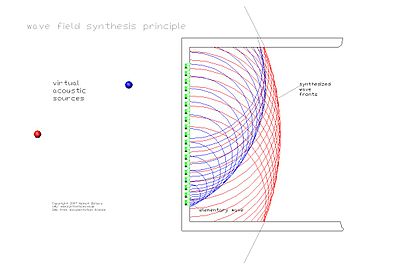

WFS is based on Huygens' Principle, which states that any wave front can be regarded as a superposition of elementary spherical waves. Therefore, any wave front can be synthesized from such elementary waves. In practice, a computer controls a large array of individual loudspeakers and actuates each one at exactly the time when the desired virtual wave front would pass through it.

The basic procedure was developed in 1988 by Professor Berkhout at the Delft University of Technology.[1] Its mathematical basis is the Kirchhoff-Helmholtz integral. It states that the sound pressure is completely determined within a volume free of sources, if sound pressure and velocity are determined in all points on its surface.

Therefore any sound field can be reconstructed, if sound pressure and acoustic velocity are restored on all points of the surface of its volume. This approach is the underlying principle of Holophony.

For reproduction, the entire surface of the volume would have to be covered with closely spaced monopole and dipole loudspeakers, each individually driven with its own signal. Moreover, the listening area would have to be anechoic, in order to comply with the source-free volume assumption. In practice, this is hardly feasible.
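For reference, one common frequency-domain form of the Kirchhoff-Helmholtz integral mentioned above is sketched below. The conventions are assumptions and not taken from the quoted text: an $e^{j\omega t}$ time dependence, $n$ as the outward normal of the surface $S$, $k = \omega/c$, and $\rho_0$ as the density of air. Other texts flip signs depending on these choices.

```latex
P(\mathbf{x},\omega) = \oint_{S} \left[
    G(\mathbf{x}\,|\,\mathbf{x}_0,\omega)\,
    \frac{\partial P(\mathbf{x}_0,\omega)}{\partial n}
  - P(\mathbf{x}_0,\omega)\,
    \frac{\partial G(\mathbf{x}\,|\,\mathbf{x}_0,\omega)}{\partial n}
\right] dS_0 ,
\qquad
G(\mathbf{x}\,|\,\mathbf{x}_0,\omega) =
    \frac{e^{-jk\,|\mathbf{x}-\mathbf{x}_0|}}{4\pi\,|\mathbf{x}-\mathbf{x}_0|} ,
\qquad
\frac{\partial P}{\partial n} = -j\omega\rho_0\,V_n
```

Read this way, the $G$ term is a layer of monopoles driven by the normal particle velocity $V_n$ on the surface, and the $\partial G/\partial n$ term is a layer of dipoles driven by the surface pressure, which is why both kinds of loudspeaker appear in the description.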

According to Rayleigh II the sound pressure is determined in each point of a half-space, if the sound pressure in each point of its dividing plane is known. Because our acoustic perception is most exact in the horizontal plane, practical approaches generally reduce the problem to a horizontal loudspeaker line, circle or rectangle around the listener.
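The Rayleigh II integral can be written out as follows. This is a sketch under assumed conventions not stated in the quoted text: an $e^{j\omega t}$ time dependence, $k = \omega/c$, $r = |\mathbf{x}-\mathbf{x}_0|$, and $\varphi$ as the angle between the plane's normal (pointing into the listening half-space) and the vector from the point $\mathbf{x}_0$ on the plane to the listening point $\mathbf{x}$.

```latex
P(\mathbf{x},\omega) = \frac{1}{2\pi} \iint_{S}
    P(\mathbf{x}_0,\omega)\,
    \frac{1 + jkr}{r^{2}}\,
    \cos\varphi\;
    e^{-jkr}\, dS_0
```

Only the pressure on the plane appears, so a single layer of (dipole-like) sources on that plane is enough for the half-space in front of it. Shrinking the plane to a horizontal line is a further approximation on top of this.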

The origin of the synthesized wave front can be in any point on the horizontal plane of the loudspeakers. It represents the virtual acoustic source, which hardly differs from a material acoustic source at the same position. Unlike conventional (stereo) reproduction, the virtual sources do not move along if the listener moves in the room. For sources behind the loudspeakers, the array will produce convex wave fronts. Sources in front of the speakers can be rendered by concave wave fronts that focus in the virtual source and diverge again. Hence the reproduction inside the volume is incomplete - it breaks down if the listener sits between speakers and inner source.
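The timing idea described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not a real WFS driving function: it computes firing delays alone and ignores the amplitude weighting and pre-filtering a proper renderer needs. The function names, the 343 m/s speed of sound, and the geometry (array on the line y = 0, listeners at y > 0) are all hypothetical choices made for the example.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed room-temperature value


def speaker_positions(count, spacing):
    """x-coordinates of a line array centred on x = 0, lying on y = 0."""
    offset = (count - 1) * spacing / 2.0
    return [i * spacing - offset for i in range(count)]


def wfs_delays(speakers_x, source_x, source_y):
    """Firing delay in seconds for each speaker (delay-only sketch).

    source_y < 0: virtual source behind the array (diverging, convex front).
    source_y > 0: focused source in front of the array (converging front).
    """
    dists = [math.hypot(x - source_x, source_y) for x in speakers_x]
    if source_y <= 0:
        # Each speaker fires when the virtual wave front would reach it.
        raw = [d / SPEED_OF_SOUND for d in dists]
    else:
        # Time-reversed case: the farthest speakers fire first so that all
        # contributions arrive at the focus point together.
        raw = [-d / SPEED_OF_SOUND for d in dists]
    earliest = min(raw)
    # Shift so the first speaker to fire has zero delay.
    return [t - earliest for t in raw]


if __name__ == "__main__":
    xs = speaker_positions(5, 0.2)
    print("behind array:", wfs_delays(xs, 0.0, -1.0))
    print("focused:     ", wfs_delays(xs, 0.0, 1.0))
```

For a source behind the array the centre speaker fires first and the edges follow; for a focused source the order is reversed, which is the convex versus concave distinction in the text.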

This explains it; see the cite in post #1.


People have talked about that for over thirty years that I'm aware of, but nobody has ever demonstrated that they could get it to work. Stereo Review Magazine once even had a cover photo of "nearphones", multiple small speakers mounted around the head area of a rope swing. All types of sensors, accelerometers, etc. to determine head rotation and position have been discussed, but there are no practical solutions yet.

BTW, when you say a lot more speakers, how many are you talking about? 100? 200? 1000? 5000? How do you generate a sound field so diffuse that if you put your ear up to where it's coming from, the walls and the ceiling of the live venue, the intensity from any one angle is too small to be audible yet in aggregate it's almost all of what you hear?
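To put a rough number on the speaker-count question for a single line array, here is a back-of-the-envelope sketch. It assumes the commonly quoted worst-case spatial aliasing limit, f = c / (2 * spacing), above which a discrete array can no longer synthesize the wave front cleanly. That formula, the 343 m/s speed of sound, and the function names are assumptions for illustration and are not from the thread; it also says nothing about covering walls and a ceiling.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed


def alias_frequency(spacing_m):
    """Worst-case spatial aliasing frequency (Hz) for a given speaker spacing."""
    return SPEED_OF_SOUND / (2.0 * spacing_m)


def speakers_needed(array_length_m, max_frequency_hz):
    """Speakers on a line of the given length to stay alias-free up to max_frequency_hz."""
    spacing = SPEED_OF_SOUND / (2.0 * max_frequency_hz)
    return math.ceil(array_length_m / spacing) + 1


if __name__ == "__main__":
    print("alias limit at 10 cm spacing (Hz):", alias_frequency(0.10))
    print("speakers for a 5 m line up to 20 kHz:", speakers_needed(5.0, 20000.0))
```

Under these assumptions a single 5 m line that is alias-free over the whole audio band already needs several hundred drivers, and a two-dimensional surface would need the square of that order.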

"Because our acoustic perception is most exact in the horizontal plane, practical approaches generally reduce the problem to a horizontal loudspeaker line, circle or rectangle around the listener."

This directly disagrees with Beranek's 2008 paper in which he cites Furuya et al.:

"Furuya et al. [21] found from extensive subjective measurements of listener envelopment that late vertical energy and late energy from behind, respectively, affect listener envelopment by approximately 40 and 60% of the lateral energy. It must be concluded that total late energy is a better component of LEV than late lateral energy. This finding is confirmed in the study by Soulodre et al."

My own experiments confirm that vertical energy and energy from behind are critical factors in enhancement by reflected sound. This flies in the face of what audio equipment experts say. Many seem convinced that reflections off the ceiling should be avoided if at all possible.


Do you know what the major technical hurdle to the headphones-type simulation is? I would guess that latency would be quite detrimental to the illusion. As regards position sensors, well that field is improving all the time. As I understand it the Microsoft Kinect is quite something, and we now have MEMS chips in mobile phones that can detect position and orientation far better than could be done just a few years ago.

The alternative using arrays of loudspeakers must surely just be a question of cost. I remember a UK company called 1-Limited developing a 'digital' loudspeaker based on arrays of 'binary' piezo transducers which might be useful. Or something along the lines of the Quad ELS63 which apparently uses concentric electrodes and a delay line to simulate a point source behind the speaker. Does it have to be concentric electrodes? Could an array of electrodes be sequenced suitably for practical WFS?

Many seem convinced that reflections off the ceiling should be avoided if at all possible.

Hello, Soundminded. I can certainly vouch for that.

Tom


Yes, if by latency you mean that the change in the signal to the earphones would lag behind the movement of your head. You'd need multiple binaural recordings made in the same spot at many angles, and you'd have about 2 to 5 microseconds to catch up with them. I've read reports that differences that short in the timing of sound reaching one ear before the other are detectable by the brain. It's part of the ability to determine the direction of sound, a very important survival tool for higher animals, including all mammals. IMO it's no accident that the organ that tells your brain the position of your head, the vestibular system of the inner ear, sits directly adjacent to the organ that senses sound, the cochlea. They work together.
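One way to relate microsecond timing figures like these to a head tracker is to ask how much head rotation shifts the interaural time difference (ITD) by that amount. The sketch below uses Woodworth's spherical-head approximation, ITD = (a / c) * (azimuth + sin(azimuth)), for a distant source. The head radius of 8.75 cm, the 343 m/s speed of sound, and the function names are assumptions for illustration, not values from the thread.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed
HEAD_RADIUS = 0.0875    # m, a commonly assumed average head radius


def itd_seconds(azimuth_rad):
    """Woodworth estimate of ITD for a distant source, azimuth in 0..pi/2."""
    return (HEAD_RADIUS / SPEED_OF_SOUND) * (azimuth_rad + math.sin(azimuth_rad))


def rotation_for_itd_change(delta_itd_s):
    """Head rotation (radians) near straight ahead that shifts ITD by delta_itd_s.

    Uses the slope of the Woodworth formula at azimuth 0, which is 2 * a / c.
    """
    return delta_itd_s * SPEED_OF_SOUND / (2.0 * HEAD_RADIUS)


if __name__ == "__main__":
    print("ITD at 90 degrees (us):", itd_seconds(math.pi / 2) * 1e6)
    print("rotation for a 5 us shift (deg):",
          math.degrees(rotation_for_itd_change(5e-6)))
```

On this model a few microseconds of ITD corresponds to well under a degree of head rotation for a source straight ahead, so the figure reads more naturally as a requirement on the tracker's angular resolution than as a time budget for the processing.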

Well, I'm thinking more along the lines of synthesising a sound field rather than recording a real one, but it could conceivably be interpolated from recordings at multiple positions, I suppose.

I would see this as purely an experimental exercise - I can't imagine it being that much better than a conventional sound system, can you? And why go to the expense of perfectly replicating the audio without the visuals and other elements of the experience. I'd be curious to hear it, though.


If it could be made to work perfectly, it would be much better than any conventional sound system IMO. That's the problem: I don't see the engineering difficulties being overcome. Trying to "fudge it" with too few available signals and too slow a response would probably be awful. Depending on how it was implemented, there'd also be a maximum range of head turning before the system reaches its limit. At that point the system would break down, so you'd have to keep your head within a specific range of angular positions.
