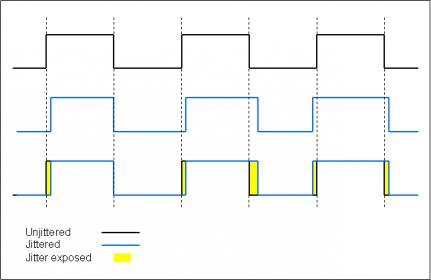

I have a question regarding jitter. Since jitter is defined as a timing error in when the leading or trailing edge of a digital signal occurs, it would seem that when a byte with cable-induced jitter is saved to a drive, the saved byte would carry that jitter too.

For example, the diagram above shows how the reconstructed byte has jitter. The bits of the new byte are not at the exact points in time they occupied when the byte originated. Since a digital music file is composed of numerous bytes, why doesn’t the jitter exist in the saved file, given that jitter was obviously introduced along the way? How can saving a file eliminate the timing errors introduced by the cable or by other factors? It would seem that the system would just write each byte to the drive as it arrives. How would it know to correct the timing errors introduced into it?

The reason I ask is that some say jitter can be introduced by any link in the chain, while others say jitter is eliminated every time the file is written, whether into RAM or onto the drive.
